- Published on

Long-running processes in Serverless Environments

- Authors

- Name

- Alexandro Martinez

- @alexandromtzg

Last week I launched Gumcrm, and I wanted to share some issues I had with long-running functions on Vercel and Supabase.

Requirement - 25,128 DB calls under 1 min

My app syncs Gumroad sales and contacts. Since I'm using my SaaS boilerplate, every sale row needs a lot of Database queries:

- Insert sale row

- Check if the current subscription can add another contact

- Check if the current user has

entity.contacts.create - Grab the next contact folio (CTC-0001, CTC-0002...)

- Insert/update contact row

- Insert/update dynamic row values (custom properties)

- Insert log

- Call

events.rows.createdwebhook event - Insert company if any (repeats 2-8 steps but for company)

- Insert contact tags based on sale

(churned, active....) - Insert

entity.contacts.tagsif didn't exist (for filtering/reporting...)

18 database calls for each sale. I have 1,396 gumroad "sales" (some are $0), that's 1,396 x 18 = 25,128 DB calls.

Problem #1 - Database (Supabase) timeout

On local development (M1 + postgres.app), it took 15 seconds to insert those 1k+ sales + contacts + companies.

On "launch day" (last Friday), I was ready to test in production (Supabase), but the first problem I encountered was:

Error: Timed out fetching a new connection from the pool. Please consider reducing the number of requests or increasing the `connection_limit` parameter (https://www.prisma.io/docs/concepts/components/prisma-client/connection-management#connection-pool). Current limit: 10.

I thought doing what the error message said would fix it:

- Set

connection_limitto 0, 1, 2, 5, 10, 20... - Set

pool_timeoutto 0, 1, 2, 5, 10, 20...

But this led to the next problem.

Problem #2 - Host (Vercel) timeout

It seemed like I "fixed" the problem... connection pool now waited for all incoming DB calls. But now I got a Vercel timeout error:

504 Error 'FUNCTION_INVOCATION_TIMEOUT'.

After a bit of research, I remembered that Vercel's Hobby plans allow for 10-second functions and Pro plans give 60-second functions, but my function lasted more than that, and +60s-functions is only for Enterprise customers.

I tried everything:

- Remove

logs,webhookcalls, companyinserts... - Use

Prisma.createManyfunction (does not return created IDs) - Go through each line of code to fix "N+1 select" problems

- Played more with

connection_limitandpool_timeout

I was really worried that my boilerplate was not production-ready, but then I thought of something in the shower at 4 am.

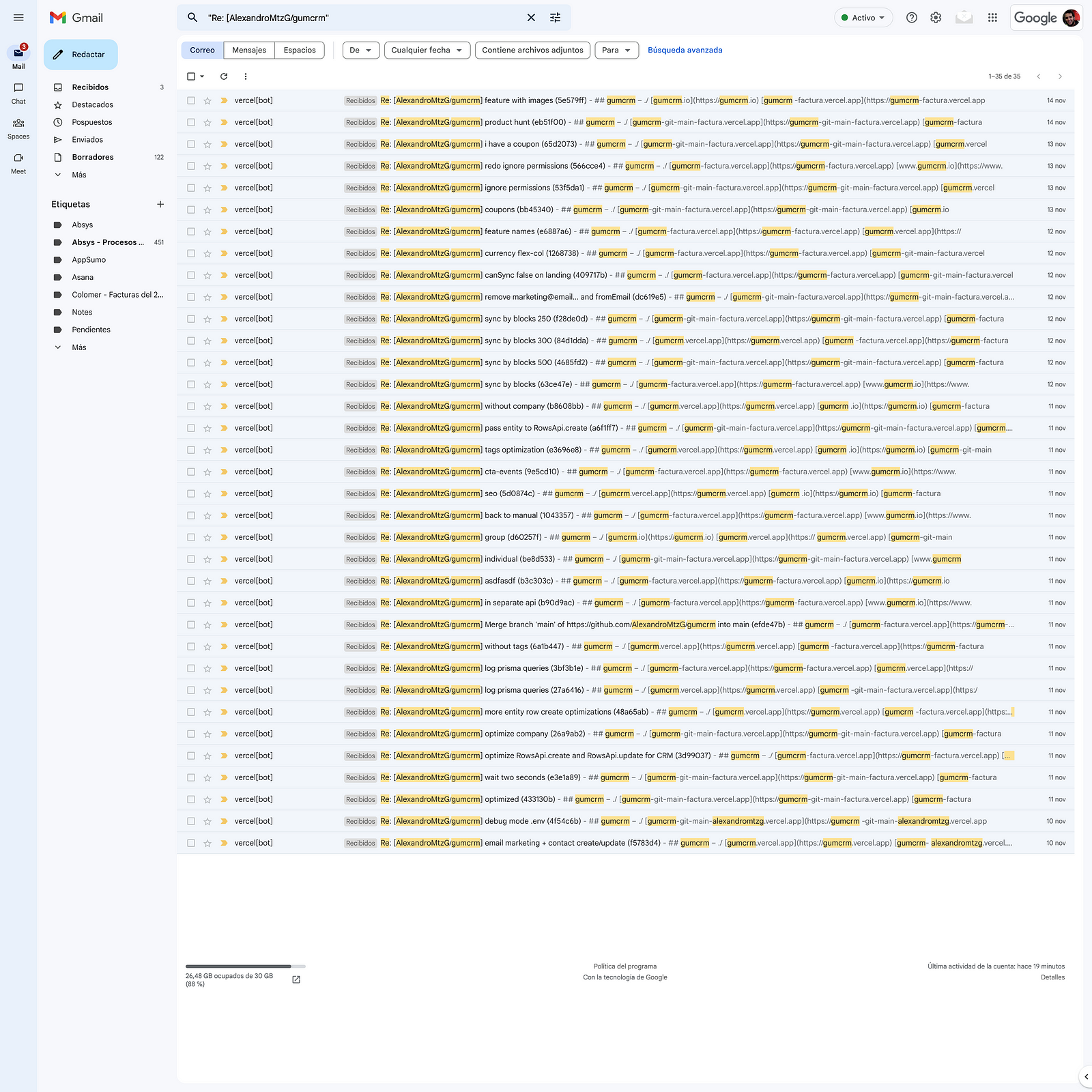

Solution - Batches of 250 rows

It may seem obvious now, but the solution was simple:

Send batches of 250 rows (yes, I tried batches of 100, 200, 500, 1,000...).

This is how it looks now on development (15s) and production (55s).

If you liked this post, follow me on Twitter or subscribe to the Newsletter for more 😃